GYAT Requisite

This is the first of three pages about the control of social behavior. It is provided to help you warm up to the subject of “control,” especially the control of the behavior of organized humanity. Nothing could be more important to the survival of our species. Nothing.

Through no fault of yours, it turns out that the science and engineering of control plays a central role in organizational behavior, because it demonstrably plays a central role in your social behavior. The reason control theory is ignored by the social sciences and the Establishment is twofold:

- The mathematical physics of control, especially for complicated systems like humans, is mathematically advanced and counterintuitive.

- The truth about the relationship of control theory to social behavior is a menace to the delusion of authoritarian infallibility.

The connection between the mathematical physics of control and social behavior is profoundly strong. It is counterproductive to ascribe human behavior to personality when the primary causation is nature’s laws, in force from the Big Bang. The false assumption prevents a practical understanding of social behavior, leading only to cul-de-sacs of thought. It is our duty to the keystone to provide him with enough technical understanding of control so he can avoid the terrible consequences of false assumptions about social behavior. To that end we have the works of Starkermann, Forrester, Ashby, and William Powers to inform and guide us.

The platform of the control of system dynamics, the knowledge that eliminates system performance uncertainty, was expressed by Lord Kelvin in the late 19th century.

Can you measure it? Can you express it in figures? Can you make a model of it? If not, your theory is apt to be based more upon imagination than upon knowledge. When you can measure what you are speaking about, and express it in numbers, you know something about it; but when you cannot measure it, when you cannot express it in numbers, your knowledge is of a meagre and unsatisfactory kind.

Since if you cannot measure it, you cannot improve it, I am never content until I have constructed a dynamic model of the system I am studying. If I succeed in making one, I understand, otherwise I do not. Lord Kelvin (1887)

The pioneer of top-down social system dynamics is Jay Wright Forrester. His overview of his experience (1975) with urban society is so on-target, it is provided on this website, intact. There is nothing we have today to alter or improve upon his assessment. It is considered required reading. An excerpt:

Our social systems belong to the class called multi-loop nonlinear feedback systems. In the long history of evolution it has not been necessary for man to understand these systems until very recent historical times. Evolutionary processes have not given us the mental skill needed to properly interpret the dynamic behavior of the systems of which we have now become a part.

In addition, the social sciences have fallen into some mistaken “scientific” practices which compound man’s natural shortcomings. Computers are often being used for what the computer does poorly and the human mind does well. At the same time the human mind is being used for what the human mind does poorly and the computer does well. Even worse, impossible tasks are attempted while achievable and important goals are ignored.

Until recently there has been no way to estimate the behavior of social systems except by contemplation, discussion, argument, and guesswork. To point a way out of our present dilemma about social systems, I will sketch an approach that combines the strength of the human mind and the strength of today’s computers.

The concepts of feedback system behavior apply sweepingly from physical systems through social systems. The ideas were first developed and applied to engineering systems. They have now reached practical usefulness in major aspects of our social systems.

I am speaking of what has come to be called industrial dynamics. The name is a misnomer because the methods apply to complex systems regardless of the field in which they are located. A more appropriate name would be system dynamics.

Plan B mentor

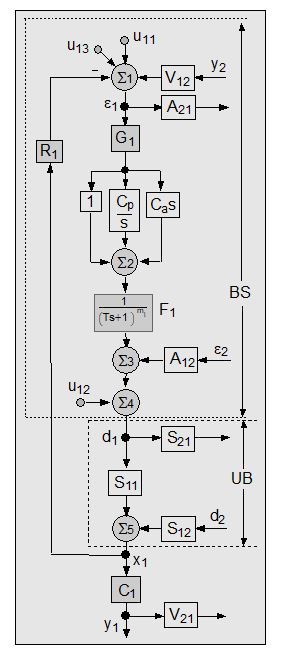

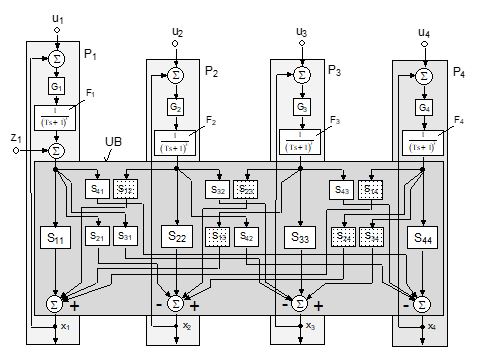

The pioneer of bottom-up social system dynamics, built up from combinations of his model of the individual, is Rudolf Starkermann, a noted professor of control engineering. He was our chief critic and close advisor for thirty years. He did dynamical studies to falsify our work on Plan B, culminating in his 2009 book on hierarchy, available on Amazon.

Since top-down failed for Forrester for fifty years straight, we relied upon Starkermann’s bottom-up models to help get to Plan B. Since all of Starkermann’s models are directly connected to control theory, his work leaves nothing to debate, to opinion. His work on social system stability limits is exact and incontrovertible. We have no instance of a Starkermann finding that didn’t work in practice.

When you use modular modeling software, which is based on mathematical physics, to proving-ground your system, your assumptions have to be explicit. Opinions don’t cut it. If your build is logically inconsistent with control theory, the program will not compile. Frustration with the computer when that occurs is wasted energy. Much is learned in building the model about system configuration and parameterization. Today modular modeling programs can be used to simulate just about any kind of system and expose it to just about any kind of event.

Some system stability factors:

- Corruption

- Turnover

- Connectance

- Gain

- Synchronization

- Opacity, GIGO

Statics alone cannot derive operational uncertainties. The parts list and architectural drawings point nowhere. You can think up uncertainties anytime, of course, but that doesn’t count towards uncertainty elimination. Only performance of the “solution” settles the matter.

The study of system dynamics, not its static configuration, is the means by which uncertainty in system performance is eliminated before the operating phase of the system begins. Such studies are done with dynamic simulations of system behavior.

The essential purpose of the dynamics phase of GYAT is to illuminate the uncertainties in system performance, before release to operations, by proving ground test results. Dealing with system dynamics requires a skill set and perspective different from what works for The Front End. As Forrester noted in 1970, people are not socialized to think system and feedback dynamics. None of the steps in flow can be left out, which is why the progression of task action is called flow.

For each step in GYAT Flow – examine:

- Does it require the upstream steps to be done first?

- Is it critical to support the next step?

- If this step is left out, inviting GIGO, does it portend regrettable choices?

Task Flow continuation

- Review input from TFE

- Starter set of uncertainties from DBE, FMEA-class studies, reliability/availability.

- Provide opportunities for stakeholders to input their uncertainties into the mix.

- Forecast update on the social context going forward.

- Compare the as-is to what is possible

- Formulate initial dynamics study-of-uncertainties plan

- Configure and parameterize the simulation using the modular-modelling blocks and connectors provided in the selected software package. This is a difficult, error-prone, time-consuming task, even for experienced simulationists. The compiler acts as a validation of a logical model. Modules constructed on mathematical physics are generic. When you introduce your configuration and parameterization data, if it doesn’t make logical sense to mathematical physics, the program won’t compile.

- When initially compiled, validate model with comparable system performance data, which must be obtained. It is vital to check the model against data from reality.

- Start down the dynamics run plan. The results will lead to plan revisions. Simulation runs can be done so fast, all manner of variations can be studied. Determine:

  - The significant variables, dependent and independent

  - Stability limits:

    - Gain

    - Synchronization

    - Connectance

- From dynamic simulation build, capture the system characteristic equation for use in feedforward control in process operations

- Falsify model: run installed system with feedforward control over items on the proving ground agenda that are practical.
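As a rough illustration of what charting a gain stability limit means, here is a minimal sketch. It is not the modular-modeling software discussed here. It assumes a toy single-loop system (an integrator corrected by delayed feedback), and the function names, the delay, and the tolerance are all hypothetical choices made for the example. The sweep finds by bisection the largest gain at which the loop still settles on its setpoint within the run.

```python
def simulate_loop(gain, delay=1, steps=400, setpoint=1.0):
    """Toy loop (assumed for illustration): x[k+1] = x[k] + gain * (setpoint - x[k-delay])."""
    history = [0.0] * (delay + 1)  # the system starts at rest
    for _ in range(steps):
        error = setpoint - history[-1 - delay]  # the controller sees a delayed reading
        history.append(history[-1] + gain * error)
    return history


def is_stable(gain, delay=1, tol=1e-3, setpoint=1.0):
    """Call a run stable if the last 50 samples all sit within tol of the setpoint."""
    tail = simulate_loop(gain, delay, setpoint=setpoint)[-50:]
    return all(abs(x - setpoint) < tol for x in tail)


def stability_limit(delay=1, lo=0.0, hi=3.0, iterations=40):
    """Bisect for the largest gain that still settles within the finite run."""
    for _ in range(iterations):
        mid = (lo + hi) / 2.0
        if is_stable(mid, delay):
            lo = mid
        else:
            hi = mid
    return lo


if __name__ == "__main__":
    for d in (0, 1, 2):
        print(f"delay={d}: gain limit is roughly {stability_limit(delay=d):.2f}")
```

For this toy loop the theoretical limits are a gain of 2 with no delay and a gain of 1 with a one-step delay. The sweep reports slightly lower values because a finite run cannot tell very slow convergence from none. Note that adding delay lowers the gain the loop can tolerate.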

Liftoff phenomena

The climax of GYAT Flow is the dynamic simulation step. It is the occasion where everything psychological and material about the system manifests together in one place and time. This confluence of human nature and natural law answers any and all questions about the necessity for and the value of the GYAT choice-making paradigm. When material dynamic models of the solution system are built, they don’t have to be logical or coherent to assemble. When the dynamic simulations are built from modular modeling software, they have to be coherent and logical or the program will not compile. Reality has no equivalent of the software compiler to keep a bucket of parts rational and compliant to formal logic. The compiler picks up on garbage data input as well.

In the project setting, the word gets around that the system process simulator is robust and at the launchpad. When the final countdown begins, the room fills with key people from all parts of the project who have invited themselves to witness system startup and performance. The area where the simulationists and visitors are observing the output displays is quiet. Hour after hour, no one leaves the room.

The scene resembles the events in NASA missions where, like the flyby of Pluto, performance during the occasion is critical to success. Nothing in mission control moves while the Mars lander is executing its intricate steps to touchdown. Only after feedback from the equipment health check does the room get animated with mutual congratulations. “I knew it would work.” Yeah, sure.

It took repeated liftoff experience for us to decode the psychology displayed in this generic scenario. The project principals were in attendance for good reasons.

- System dynamics knowledge. Once reliable information about system performance becomes available, the primary project language shifts from statics to dynamics, and stays there. It forms a new valued class in the hierarchy.

- Significant variables determination. During the TFE statics phase, everyone develops opinions about which variables really matter to system performance, no two opinions the same. When the test program reveals the true key variables, impossible to know in advance, everyone has to eat a side order of crow. You also learn which dependent variables destabilize the system if they are forced to be constant.

- As the test program proceeds, knowledge of the key variables in hand, the principals get ideas for system performance improvement. When the ideas show promise, the simulator and its test agenda are expanded to accommodate them. When the results promise big jumps in productivity, changes are made to the system design by the principals.

More than once we have been stunned by department principals who, having fought off every outside suggestion for change for years, suddenly and quietly made big changes happen in their design. There is no discussion of budget and schedule. No red tape appears. The fix “appears” in the construction.

It is rare that any Plan A project ever gets to the dynamic simulation step before it is sunk by GIGO. Just like Babbage’s machines, simulation software does not tolerate garbage inputs.

The importance of knowing the significant factors in system dynamics cannot be overemphasized. You know we’re in the age of the earth called the Holocene. This period of little variation in earth habitat is when humans formed civilizations. As human population growth has destabilized that steady, nurturing habitat, scientists have proclaimed the current period the Anthropocene. While debates of all kinds about what to do about the destabilization rage on, the brutal fact is that the significant factors of human habitat have never been determined by dynamic simulation of the incredibly complicated earth “system.” Debates that are based on opinion and incomplete information are a colossal waste of time.

The dependency on guesswork feeds the authoritarians who simply pick out a single cause-effect factor at random and waste the world’s resources showing it doesn’t work. For humanity it means nothing less than extinction by ignorance.

Dynamic Simulation

Modeling the future is a must. No one can track the intertwined loops over time by human brainwork. Those who don’t model can’t possibly investigate the risks and instabilities of the future before commitment to construction. Opinions, no matter how many are gathered, are notoriously incompetent at projecting the future. More does not mean better. Experience only helps to build the model. No one can pick the significant variables. No one can chart the stability limits. Human brainpower alone cannot identify dynamic uncertainties. IA (intelligence amplification) is required (Ashby).

When modular modeling software first became available in 1984, it made dynamic simulation of systems affordable to the public. The advertising is explicit:

Each MMS™ module has been developed by experienced simulation engineers using “first principles;” that means each module has been formulated using fundamental governing laws. For example, our thermo-hydraulic modules conform to the first law of thermodynamics requiring the conservation of energy. As a result, MMS™ provides less experienced users the ability to develop high-fidelity dynamic simulations quickly and efficiently.

In addition, our more advanced users will appreciate that we provide theory documentation for each module, which contains the fundamental equations and assumptions for that module.

At the time its beneficial power was made affordable to anyone, we predicted it would go viral and become standard practice, especially in industry. Since it portended the death of GIGO, good choices would become commonplace at great benefit to society.

Our prediction was dead wrong. Today, dynamic simulation of systems is employed in less than 3% of the applications that would profit greatly from its use. Why?

Since the reason cannot be technical, it must be psychological/social. The answer to the riddle lies in what building a dynamic simulation does to the dysfunctional organization. It:

- Exposes the voids and errors in the system knowledge base, its garbage. Typically, more than half of the requisite information has to be assembled from scratch. Painstaking effort.

- Refuses to compile if garbage information is introduced instead.

- Exposes, through its connection to natural law, errors in system design that are beyond dispute. The program will not violate the conservation laws for anyone.

Because of the perceived risk to social status brought on by exposing the truth, the dynamic simulation step is omitted. The upper hierarchy consumers prefer to shoulder the consequences of unnecessary errors over a successful project. As history teaches, the people getting hurt by this choice are never a factor.

Power is always potential. That is, when it is used it becomes something else, either force or authority. This is the respect which gives meaning, for example, to the concept of a “fleet in being” in naval strategy. A fleet in being represents power, even though it is never used. When it goes into action, of course, it is no longer power, but force. It is for this reason that the allies were willing to destroy the battleship Richelieu, berthed at Dakar, after the fall of France, at the price of courting the disfavor of the French. Why should the battleship, the mightiest engine of destruction afloat, require such care in assuring its protection with sufficient cruiser, destroyer, and air support? The answer is that a battleship is even more effective as a symbol of power than it is as an instrument of force. Elton Mayo (1943)

Putting mathematical physics to work

Take note of feedforward control as an integral part of GYAT. It’s a major payoff factor in safety, availability, performance, and productivity – and a stellar uncertainty reducer in the operational reality.

Feedforward control is distinctly different from open loop control and teleoperator systems. Feedforward control requires a mathematical model of the process and/or machine being controlled and the system’s relationship to any inputs or feedback. Neither open loop control nor teleoperator systems require the sophistication of a mathematical model of the system.

The benefits of feedforward control are significant and justify the extra time and effort required to implement the control technology. Control accuracy can often be improved by as much as an order of magnitude. Energy consumption by the feedforward control system and its actuators is substantially lower than with other controls. Stability is enhanced such that the controlled system can be built of lower cost, while still being highly accurate and able to operate at high speeds. Other benefits of feedforward control include reduced wear and tear on equipment, lower maintenance costs, higher reliability and a substantial reduction in hysteresis.
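To make the role of the mathematical model concrete, here is a minimal sketch comparing proportional feedback alone with the same feedback plus a model-based feedforward term. Everything in it is an assumption made for illustration: the first-order plant `x[k+1] = a*x[k] + b*u[k] + disturbance`, the parameter values, a disturbance that is measured exactly, and a controller model that matches the plant perfectly. It is a toy, not evidence for the size of the benefits described above.

```python
def accumulated_error(use_feedforward, steps=200, a=0.9, b=0.5, kp=0.8, setpoint=1.0):
    """Sum of |setpoint - x| over a run of an assumed first-order plant.

    Plant (hypothetical): x[k+1] = a * x[k] + b * u[k] + disturbance[k]
    """
    x = 0.0
    total_abs_error = 0.0
    for k in range(steps):
        # A step disturbance arrives at k = 50; it is assumed to be measurable.
        disturbance = 0.2 if k >= 50 else 0.0

        # Feedback part: reacts only after an error has already appeared.
        u = kp * (setpoint - x)

        if use_feedforward:
            # Feedforward part: use the plant model to compute the input that
            # holds the setpoint and cancels the measured disturbance in advance.
            u += ((1.0 - a) * setpoint - disturbance) / b

        x = a * x + b * u + disturbance
        total_abs_error += abs(setpoint - x)
    return total_abs_error


if __name__ == "__main__":
    print("feedback only       :", round(accumulated_error(False), 2))
    print("feedback+feedforward:", round(accumulated_error(True), 2))
```

With a perfect model the feedforward term cancels the disturbance before it shows up as error, while proportional feedback alone is left with a standing offset. If the model is wrong, the cancellation is only partial, which is why the model has to be checked against data from reality.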

In physiology, feedforward control in human nature is exemplified by the normal anticipatory regulation of heartbeat in advance of actual physical exertion by the central autonomic network. Feedforward control can be likened to learned anticipatory responses to known cues (predictive coding). Feedforward systems are also found in biological control of other variables by many regions of animals’ brains.

In the case of biological feedforward systems, such as in the human brain, knowledge or a mental model of the plant (body) can be considered to be mathematical as the model is characterized by limits, rhythms, mechanics and patterns. A pure feedforward system is different from a homeostatic control system, which has the function of keeping the body’s internal environment ‘steady’ or in a ‘prolonged steady state of readiness.’ The flyball governor, by contrast, is an example of feedback design.

The intuition zone

By now you have figured out for yourself that the process of building prudent choices is a bear, even in the best of circumstances. When the Plan A organization goes as far as dynamic validations prior to launch, it always does so because of outside force. Prudent choice-making as normal business is a Plan B phenomenon.

What about the low-significance issues, the great bulk of the decision-making world? No one will go to the trouble and expense of putting every issue that requires human attention through the proving ground.

There is only one absolute requisite for making choices by intuition and precedent. The approach must include a tight feedback loop, what we have called Short-Cycle Run-Break and Fix (SCRBF) for fifty years. The Plan B strategy is to find out about the errors and omissions while the consequences are still little. When you arrange for SCRBF, the cost of poor performance will be small and short-lived. With SCRBF you will eventually ricochet off of your bad choices to a satisfactory choice.
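The arithmetic behind keeping the cycle short can be sketched with a toy model. Its assumptions are made up for the example: one bad choice slips in every ten steps, each unfixed bad choice costs more the longer it stays open (cost per step equal to its age), and every review catches and fixes everything open. The function and parameter names are hypothetical.

```python
def total_cost(cycle_length, steps=1000, error_every=10):
    """Accumulated cost of bad choices when reviews happen every cycle_length steps."""
    open_error_ages = []  # age, in steps, of each bad choice not yet fixed
    cost = 0
    for step in range(1, steps + 1):
        if step % error_every == 0:
            open_error_ages.append(0)  # a new bad choice slips in
        # Every open error gets one step older, and older errors cost more.
        open_error_ages = [age + 1 for age in open_error_ages]
        cost += sum(open_error_ages)
        if step % cycle_length == 0:
            open_error_ages = []  # run-break-and-fix: the review clears everything
    return cost


if __name__ == "__main__":
    for cycle in (5, 10, 50):
        print(f"review every {cycle:>2} steps -> total cost {total_cost(cycle)}")
```

Under these assumptions the cost grows much faster than the cycle length, because errors both pile up and age between reviews. That is the sense in which a tight loop catches errors while the consequences are still little.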
